Unless you take no steps to correct your actions.

In this article we will look at the two common penalties – manual and algorithmic – and how to identify them, before looking at two ways to recover from penalties using methods that (if maintained) can prevent further penalties in the future.

Were We Hit By a Penalty?

Determining whether or not your site has been hit depends on the type of penalty: either manual or algorithmic. Manual penalties are imposed by Google’s webspam team, while algorithmic penalties follow a change to any of Google’s indexing processes, and require more effort to identify.

Identifying Manual Penalties

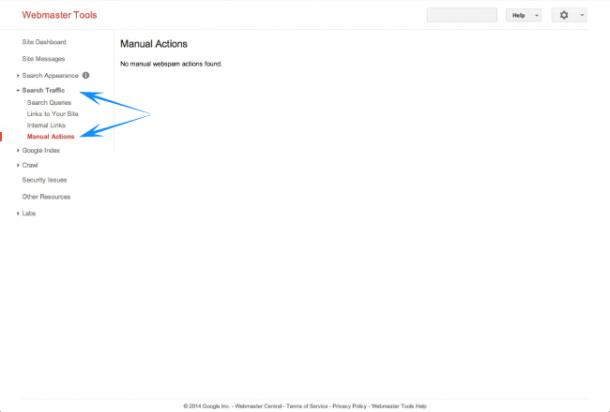

In the past, manual penalties were communicated via email, assuming you had activated Google Webmaster Tools. In the third quarter of 2013, Google added a Manual Actions tab to Webmaster Tools, making it even easier for site operators to monitor for manual penalties.

The Manual Actions tab can be found under the Search Traffic menu in the left sidebar of your Webmaster Tools account. Any communications relating to penalties will appear here, along with a Request a review link, although this should only ever be clicked once you have analysed the action(s) and corrected any issues. There are two types of actions:

- Site-Wide Matches – these are actions that affect your entire site, and as such they should be addressed first.

- Partial Matches – these are actions that only affect specific URLs, pages or links. Bear in mind that Google will only list the first 1,000 URLs, so the list may not show every affected page.

The reasons for a manual action include the following:

- Unnatural links to (or from) your site

- Hacked site

- Thin content

- Pure spam

- User-generated spam

- Cloaking and/or sneaky redirects

- Hidden text and/or keyword stuffing

- Spammy freehosts

- Spammy structured markup

Google’s support pages for Webmaster Tools list all of these reasons, along with a detailed explanation of what each one means and the recommended action you can take.

Identifying Algorithmic Penalties

Algorithmic penalties are more difficult to identify since they aren’t accompanied by a handy notification. The first indication that you may have been affected by an algorithmic penalty is a noticeable drop in your search traffic. Use Algoroo to look for any significant spikes in Google algorithm activity around the time of the drop; with Algoroo you can go back as far as December 2012. It is important to read up on any major updates, and on minor ones if they get any coverage, because what you learn can help you in your recovery efforts.

Although the Panda algorithm (the one that measures the value of your content) has matured to a point where Google has stopped announcing every single change to it, major changes such as the May 2014 update are still communicated in some detail. Penguin (the algorithm that assesses link quality and authenticity) is still young, and Google does try to be more open about changes to this algorithm.

Once you know which algorithmic changes have affected your site, you can start working on recovering. This will involve either cleaning up backlinks or improving your site content, two tasks that should have become part of your overall SEO strategy some two years ago.
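One way to make "a noticeable drop" concrete is to compare average traffic before and after each day, then check whether the steepest drop falls close to a known update. The sketch below is only an illustration and is not part of any Google or Algoroo tooling: the function names, the 7-day window, the 3-day tolerance, the traffic figures and the update entry are all assumptions made for the example. It also assumes one visit count per day with no gaps, and that you have copied the update dates by hand from Algoroo or from Google’s announcements.

```python
from datetime import date, timedelta


def largest_drop(daily_visits, window=7):
    """Return (day, fraction) for the steepest fall in average daily visits.

    For each candidate day, the mean of the `window` days before it is
    compared with the mean of the `window` days starting on it.
    Returns (None, 0.0) if traffic never falls.
    """
    days = sorted(daily_visits)
    best_day, best_drop = None, 0.0
    for i in range(window, len(days) - window + 1):
        mean_before = sum(daily_visits[d] for d in days[i - window:i]) / window
        mean_after = sum(daily_visits[d] for d in days[i:i + window]) / window
        if mean_before == 0:
            continue  # avoid dividing by zero on days with no prior traffic
        drop = (mean_before - mean_after) / mean_before
        if drop > best_drop:
            best_day, best_drop = days[i], drop
    return best_day, best_drop


def updates_near(day, known_updates, tolerance_days=3):
    """List the names of updates dated within `tolerance_days` of `day`."""
    if day is None:
        return []
    return [name for name, when in known_updates.items()
            if abs((day - when).days) <= tolerance_days]


# Made-up sample data: 14 days at 1,000 visits, then 14 days at 500.
start = date(2014, 5, 6)
visits = {start + timedelta(days=n): (1000 if n < 14 else 500)
          for n in range(28)}

# Placeholder entry; replace it with dates you have looked up yourself.
known_updates = {"Example update": date(2014, 5, 20)}

drop_day, drop_size = largest_drop(visits)
print(drop_day, f"{drop_size:.0%}", updates_near(drop_day, known_updates))
```

A match between a drop and an update date is only a starting point for further reading; seasonal dips, tracking problems or a site outage can produce the same pattern.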

Disavowing Links

Many of the reasons for a penalty relate to behaviour that you have direct control over, from the quality of your content through to the site structure, markup and outbound links. The opposite is true when it comes to inbound links, over which you have very little control. Part of your SEO strategy should involve monitoring backlinks using your preferred analytics suite, and assessing the quality of each link. This task is manageable, albeit tedious, when you have fewer than 100 inbound links to grade, but it becomes more laborious, and less manageable, as you start attracting hundreds, if not thousands, of inbound links.

Time is critical when your site has been hit with a penalty, so using link research tools can help speed up the process. Unfortunately, they do not replace the need for a manual review of your inbound links. They should only be used to help you sort inbound links into high-priority (toxic links), medium-priority (suspicious links) and low-priority (all other links).
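The sorting step can be sketched in a few lines. This example assumes your link research tool can export each inbound link with a risk score between 0 and 1; the `triage_links` name, the score scale, the cut-off values and the sample links are all hypothetical and would need adjusting to whatever your tool actually reports.

```python
def triage_links(scored_links, toxic_at=0.7, suspicious_at=0.4):
    """Sort (url, risk_score) pairs into manual-review buckets.

    The cut-offs are arbitrary illustrations, not recommended values.
    """
    buckets = {"high": [], "medium": [], "low": []}
    for url, score in scored_links:
        if score >= toxic_at:
            buckets["high"].append((url, score))      # likely toxic
        elif score >= suspicious_at:
            buckets["medium"].append((url, score))    # suspicious
        else:
            buckets["low"].append((url, score))       # everything else
    # Within each bucket, put the riskiest links at the top of the queue.
    for links in buckets.values():
        links.sort(key=lambda item: item[1], reverse=True)
    return buckets


# Made-up export from a link research tool.
sample = [
    ("http://spam.example.com/stuff/paid-links.html", 0.92),
    ("http://blog.example.org/roundup", 0.15),
    ("http://directory.example.net/listing", 0.55),
    ("http://spam.example.com/stuff/comments.html", 0.81),
]

review_queue = triage_links(sample)
for priority in ("high", "medium", "low"):
    print(priority, [url for url, _ in review_queue[priority]])
```

The buckets only decide the order in which you review links by hand; nothing in them should be treated as confirmed toxic until you have looked at it yourself.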

Once you have identified (and confirmed) the toxic links – the inbound links that have most likely resulted in a penalty for your website – you can move on to the next stage.

Which is not the disavow links tool.

The next stage is to contact the webmaster or site operator of each of these toxic websites, and ask them to remove any link(s) to your website. This extra step is not the work of a sadistic mind; the disavow links tool does not remove links to your website, it merely instructs Google to ignore specific inbound links when assessing your site. And the next time you pull a backlinks report for your website, all of these toxic links are still going to show up, making it more difficult for you to quickly analyse inbound links. It is only after you have attempted to have unwanted links removed that the disavow links tool should be used.

Using the disavow links tool is relatively simple, but does require some effort on your part in compiling the file you will submit. Any mistakes you make in the file can result in the entire list being rejected, or worse yet, perfectly valid links being ignored. Ensure the following when compiling your list:

- It should be a plain text file (.txt), encoded in UTF-8 or 7-bit ASCII

- Each line should contain a single link or domain, never multiple links/domains

- If you want an entire domain ignored you need to precede the domain name with domain:, resulting in domain:shadyseo.com

- If it is a specific URL/page, you type out the full URL, for example, http://www.shadyseo.com/somecontent.htm

- Include relevant comments, with # at the beginning of each line of comments

Google does provide a good example illustrating all of the above:

    # example.com removed most links, but missed these
    http://spam.example.com/stuff/comments.html
    http://spam.example.com/stuff/paid-links.html
    # Contacted owner of shadyseo.com on 7/1/2012 to
    # ask for link removal but got no response
    domain:shadyseo.com
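If you keep your confirmed toxic links in a spreadsheet or script, the formatting rules above can be applied automatically before you upload anything. The following is a minimal sketch that checks only the rules listed in this article; the function names are made up for the example, and the disavow links tool itself may apply checks that are not covered here.

```python
from urllib.parse import urlparse


def disavow_line(entry):
    """Validate one entry: either 'domain:example.com' or a full URL."""
    entry = entry.strip()
    if not entry:
        raise ValueError("empty entry")
    if any(ch.isspace() for ch in entry):
        raise ValueError(f"only one link or domain per line: {entry!r}")
    if entry.startswith("domain:"):
        domain = entry[len("domain:"):]
        if not domain or "/" in domain:
            raise ValueError(f"expected a bare domain name: {entry!r}")
        return entry
    parsed = urlparse(entry)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(f"expected a full URL: {entry!r}")
    return entry


def build_disavow_file(groups):
    """Build the file text from (comment, entries) pairs.

    Every line of a comment is prefixed with '#', and each entry is
    written on a line of its own.
    """
    lines = []
    for comment, entries in groups:
        for comment_line in comment.splitlines():
            lines.append("# " + comment_line.strip())
        for entry in entries:
            lines.append(disavow_line(entry))
    return "\n".join(lines) + "\n"


def write_disavow_file(path, text):
    """Save the text as a plain UTF-8 text file."""
    with open(path, "w", encoding="utf-8", newline="\n") as handle:
        handle.write(text)


# Rebuilds Google's example shown above.
text = build_disavow_file([
    ("example.com removed most links, but missed these",
     ["http://spam.example.com/stuff/comments.html",
      "http://spam.example.com/stuff/paid-links.html"]),
    ("Contacted owner of shadyseo.com on 7/1/2012 to\n"
     "ask for link removal but got no response",
     ["domain:shadyseo.com"]),
])
print(text, end="")
```

Calling `write_disavow_file("disavow.txt", text)` would then save the result ready for upload; the filename is only an example. A script like this catches formatting slips, but it cannot tell you whether a link deserves to be on the list, so the manual check is still needed.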

After compiling your list, and checking it again to ensure you haven’t made any mistakes and that it only includes links and domains you want ignored, you can upload it via the disavow links tool page. You should also note the following when uploading a file to the disavow links tool:

- Uploading a file replaces any you have previously uploaded, and

- It does take some time for the request to be processed, so don’t expect to see any immediate changes.

Future-Proofing Your Website

As the Internet has matured, we have moved from accepting almost any website to now (mostly) only accepting websites that are informative and up-to-date. This requires a considerable commitment from site operators, not only in terms of capital, but also in terms of time. One way of managing this commitment is to future-proof your website: to update and maintain it in such a way that any future changes to search and indexing algorithms don’t impact it too negatively.

This is no easy task, since the major search engines constantly have to update their algorithms in order to serve the most relevant content and to reduce the impact of questionable SEO practices. Not all SEO practices are questionable, but there will always be a handful of individuals trying to game the system. The best way of future-proofing your website is to always follow white hat SEO techniques, and to have excellent, up-to-date knowledge of the major search engines’ guidelines.

The introduction of Panda has also resulted in site operators having to pay close attention to the content of their website, with quality superseding quantity. This, in turn, has led to the rapid growth of content marketing articles and service providers, to the point where it does begin to resemble a bubble about to burst. While the amount of chatter around content marketing, and the number of service providers, will eventually settle, the need for quality content will not disappear.

What constitutes white hat SEO may change over time, but what constitutes quality content won’t – even though quality is highly subjective. Simply stated:

Quality content informs, educates and/or entertains people (your audience).

The better it is at doing that, the more likely it is to be shared, quoted and linked to, even though this is not the primary objective of quality content. And the content is always written for an audience of real people, not search engine algorithms.

Distilled down to bullet points, future-proofing your website is about:

- Always following current white hat SEO, and

- Always investing in quality content; content that answers your audience’s questions, and provides solutions to their problems.

Conclusion

As you will have learned from the above points, identifying penalties affecting your website is not too difficult, even if it does require some investigative work on your part. The path to recovery, however, is not always as easy, and requires a lot more effort and some patience.

Sometimes the results of your labour can be seen within days, while at other times it can take weeks before you see any positive changes.